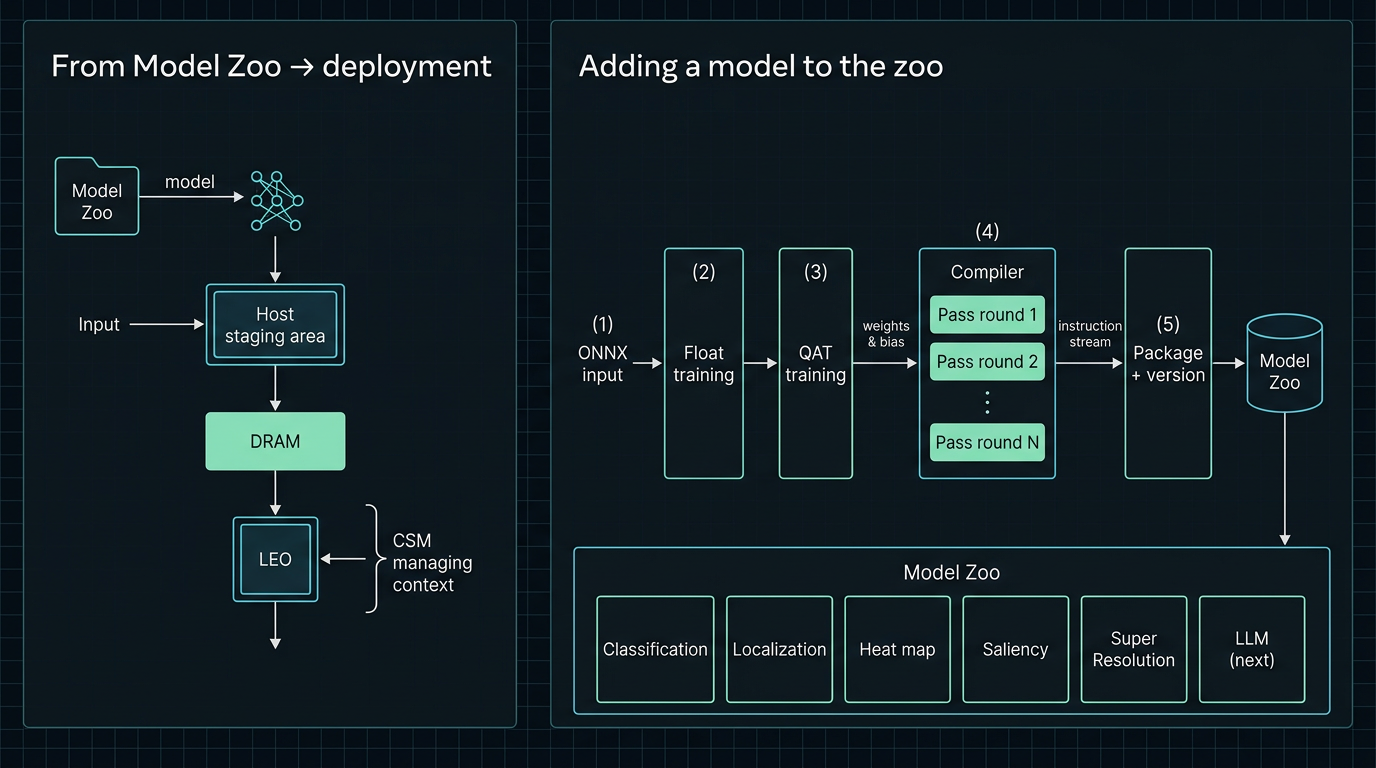

Model zoo

LeoGreenAI maintains a growing public catalog of models for vision, analysis, and related tasks, each brought up through the production compiler to the LEO execution core on FPGA. The catalog is a proof of concept and capability showcase, not the ceiling on what you can run. See the roadmap below for what is next, with early access for partners and subscribers.

ModelZoo — existing classes

Classification

Image and signal classification networks for benchmarking accuracy, throughput, and CSM-visible utilization on fixed configurations.

Localization

Detection and localization-style models for studying memory traffic, tiling, and parallel instruction overlap on real hardware.

Heat map generation & saliency

Models and pipelines that produce spatial heat maps and saliency outputs—useful for visualization-heavy workloads and mixed precision studies.

Super-resolution

Reconstruction and enhancement networks included in the zoo for stress-testing bandwidth and multi-stage graphs.

Large language models (LLM)

In progress — available soon

LLM inference will run on the same compiler and CSM path as the vision models and will be listed here when it ships.

Reference models tied to industry-standard datasets (CIFAR, COCO, and ImageNet-scale benchmarks), as well as models trained on custom partner corpora, are available today in the catalog.

Roadmap — coming soon

The LeoGreenAI team is actively extending compiler, ISA, and FPGA support so you can do more on LEO hardware. The categories below are on the roadmap and will be added to the public ModelZoo soon—watch this space and release notes as drops ship.

Next major focus: large language models. We are prioritizing LLM and transformer inference—bringing attention-heavy workloads to the same observable, compiler-aligned stack you already trust for vision. Subscribers and research partners can expect early access as soon as builds are ready.

- Richer vision & dense prediction — segmentation-style and other dense pipelines that push memory and tiling further than classic detection.

- Video & temporal AI — clip- and stream-style models for real-world motion and time.

- Generative inference — multi-stage and diffusion-class graphs as toolchain support matures.

- Audio & speech — selected ONNX-friendly recognition and enhancement models where programs need them.

Research partnerships help us prioritize what lands first—so joint roadmaps, publications, and evaluation goals can shape the order of public releases while the zoo keeps growing for everyone.

Adding models & private zoos

Partners and customers are not limited to the public catalog. We provide a structured intake flow for bringing new models through validation and compiler bring-up—whether they should land in the shared zoo, in a private drop for your team only, or both.

- Intake — artifacts, data-handling rules, and accuracy targets captured under agreement (e.g. ONNX, checkpoints, training notes).

- Compiler & QAT alignment — graph fit, quantization-aware training or conversion steps as required for your configuration.

- Silicon bring-up — validated inference on agreed FPGA (or future silicon) builds with CSM-visible capture.

- Packaging — deliverables scoped to the public zoo, a private zoo package, or both.
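As a rough illustration of the four steps above, the sketch below models an intake request as a small manifest that tracks which stage comes next. Everything in it is an assumption made for illustration: the `IntakeRequest` class, its field names, the stage identifiers, and the example values are hypothetical and do not describe a LeoGreenAI schema or tool.

```python
import json
from dataclasses import dataclass, field, asdict
from enum import Enum
from typing import List, Optional


class ZooTarget(Enum):
    """Where the packaged deliverable should land (hypothetical labels)."""
    PUBLIC = "public"
    PRIVATE = "private"
    BOTH = "both"


# The four stages from the list above, in order. Identifiers are illustrative.
STAGES = ("intake", "compiler_qat_alignment", "bring_up", "packaging")


@dataclass
class IntakeRequest:
    """Hypothetical intake manifest; not an official LeoGreenAI format."""
    model_name: str
    artifacts: List[str]          # e.g. ONNX file, checkpoints, training notes
    accuracy_target: float        # agreed accuracy metric, as a fraction 0..1
    target_configuration: str     # agreed FPGA build, free-form text here
    zoo_target: ZooTarget = ZooTarget.PRIVATE
    completed_stages: List[str] = field(default_factory=list)

    def next_stage(self) -> Optional[str]:
        """Return the first stage not yet completed, or None if all are done."""
        for stage in STAGES:
            if stage not in self.completed_stages:
                return stage
        return None

    def complete(self, stage: str) -> None:
        """Mark a stage as done, rejecting out-of-order completion."""
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(f"expected {expected!r}, got {stage!r}")
        self.completed_stages.append(stage)

    def to_json(self) -> str:
        """Serialize the manifest, storing the zoo target as a plain string."""
        data = asdict(self)
        data["zoo_target"] = self.zoo_target.value
        return json.dumps(data, indent=2)


if __name__ == "__main__":
    request = IntakeRequest(
        model_name="demo-classifier",
        artifacts=["model.onnx", "training_notes.md"],
        accuracy_target=0.90,
        target_configuration="example-fpga-build",
        zoo_target=ZooTarget.BOTH,
    )
    request.complete("intake")
    print(request.next_stage())
    print(request.to_json())
```

Collecting the artifacts, accuracy target, and target configuration in one place before making contact mirrors what the intake step asks for; the actual format and required fields would be set under agreement.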

For organizations that need their own portfolio on LEO hardware, we support private model zoos: you build a customer-specific set of checkpoints using our intake flow and, where your program requires it, our training or retraining flow. LeoGreenAI supplies the tooling and engineering support to run that pipeline end to end, so your benchmarks and production candidates stay yours while still targeting the same ISA, bitstreams, and observability as the public reference set.

The public zoo remains a shared proof point for what the stack can do; your private zoo is where proprietary graphs, datasets, and release cadence align with your product or research roadmap.

Access

Public reference packages ship with the FPGA bitstream subscription or under broader partnership agreements. For intake into the public zoo or a private zoo, a prioritized port, or an unpublished architecture, contact us with your ONNX or training artifacts and target configuration.